Let’s imagine that a QA department has been given the task of API testing a web component. Questions arise immediately, from “what exactly should be tested?” to “which components should be tested: the functional part, or usability?”

Below, we analyze the peculiarities of this type of testing and learn how to design simple test cases for functional testing of any API.

As an example, we will use the schema of an imaginary SOAP API for a state project with fairly complex logic.

We will look closely at how to create tests properly, how best to document them, and what exactly deserves our attention.

Good news

There are three good things about working with any API:

- While an API is being developed, a block of technical documentation is always created to help third-party developers use the product for their own needs. In other words, we can prepare a set of test checks as early as the stage when the technical assignment is written, which helps us study the specifics of the future functionality more thoroughly and find inconsistencies before the product is completed;

- For all SOAP services, there are data schemas (XSD): validating a request against a particular schema significantly simplifies the life of a QA specialist. The main task after the schema has been released is to check whether it reflects the technical assignment. If the logic of the schema does not meet the terms of the technical assignment, the number of functional tests that can be run is zero, since the schema will have to be edited and checked again;

- Usually, the functionality exposed through the API partly mirrors the interface of the product, so we can visualize what should be displayed in the interface and how. In addition, we can find UI errors, since the functionality is tested from a different perspective.
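The check that a schema reflects the technical assignment can be partly automated. The following is a minimal sketch, not a full XSD validator: it only compares the field names declared in a schema with the fields the assignment requires. The schema fragment, the field names, and the `schema_fields` helper are all assumptions made up for this illustration.

```python
import xml.etree.ElementTree as ET

XS = "{http://www.w3.org/2001/XMLSchema}"

# Hypothetical schema fragment for an employee record (not from a real project).
EMPLOYEE_XSD = """
<xs:schema xmlns:xs="http://www.w3.org/2001/XMLSchema">
  <xs:element name="employee">
    <xs:complexType>
      <xs:sequence>
        <xs:element name="lastName" type="xs:string"/>
        <xs:element name="firstName" type="xs:string"/>
        <xs:element name="phone" type="xs:string" maxOccurs="2"/>
      </xs:sequence>
    </xs:complexType>
  </xs:element>
</xs:schema>
"""

# Fields that the (hypothetical) technical assignment requires.
SPEC_FIELDS = {"lastName", "firstName", "middleName", "phone"}


def schema_fields(xsd_text, root_name):
    """Return the names of all elements declared inside the root element."""
    schema = ET.fromstring(xsd_text)
    root = schema.find(f"{XS}element[@name='{root_name}']")
    if root is None:
        raise ValueError(f"element {root_name!r} is not declared in the schema")
    # iter() also yields the root element itself, so it is excluded explicitly.
    return {el.get("name") for el in root.iter(f"{XS}element") if el is not root}


declared = schema_fields(EMPLOYEE_XSD, "employee")
missing_in_schema = SPEC_FIELDS - declared   # required by the assignment, absent in the XSD
extra_in_schema = declared - SPEC_FIELDS     # present in the XSD, unknown to the assignment

print("Missing in schema:", sorted(missing_in_schema))
print("Not in assignment:", sorted(extra_in_schema))
```

In this made-up example the schema lacks `middleName`, which is exactly the kind of inconsistency worth reporting before any functional test is written.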

Preparatory work for creating an API test design

While developing the basic test framework, we can use schemes and mind maps.

Usually, these two visualizations are combined. It looks like this:

The Combination of Schemes and Mind-Maps

Let’s imagine that we need to test an ordinary service that uploads information about employees to the system database, so that the company can give each employee a unique login and password for creating an account.

The service offers a data import operation, a complete export (which also assigns the unique ID and password), and a shortened export that any employee can use to download their own login, contact details, position, and responsibilities.

Direct interaction with the API is risky because all fields transmitted through the WSDL are available, but not all of them should be editable or fillable (for example, when exporting, an employee must not be able to download information about other employees).

In our case, we should check:

- Boundary values for the set of fields (for example, one employee may have two phone numbers and no middle name);

- The limit on every field in the XSD;

- Boundary values for the data inside the fields (here we test against the limits specified in the technical assignment, and also check that the schema is properly implemented in the back end, so that all format errors are reported and not only system errors);

- Authorization checks (all possible checks of access rights). For example, complete export and import can be used only by an employee with particular (admin) rights. On the UI side, such tests are not obvious: without the rights, a user simply doesn’t see the corresponding menu or button;

- Functional bugs: if information about work was changed during a particular time interval, we should check whether the user already exists in the system (so as not to duplicate entities); if the employee’s questionnaire has non-editable fields (for example, a nickname), we should check that the system identifies them properly and displays a message that the fields can’t be changed when someone tries to edit them;

- When account information is created and edited, we should check that the information in the UI (if there is one, of course) is filled in and displayed correctly.
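The boundary checks from this list are easy to generate once the limits are known. Below is a minimal sketch; the limits themselves (a last name of 1 to 50 characters, 0 to 2 phone numbers) are assumptions for illustration and would in practice come from the XSD and the technical assignment.

```python
def boundaries(low, high):
    """Return (value, should_be_accepted) pairs just outside and on each limit."""
    cases = []
    for n in sorted({low - 1, low, high, high + 1}):
        if n >= 0:  # a negative length or count cannot be sent at all
            cases.append((n, low <= n <= high))
    return cases


# Hypothetical limits; real ones come from the XSD and the technical assignment.
LAST_NAME_LENGTH = (1, 50)   # characters inside the field
PHONE_COUNT = (0, 2)         # how many times the field may occur

# Data inside a field: strings of the boundary lengths.
name_cases = [("x" * n, ok) for n, ok in boundaries(*LAST_NAME_LENGTH)]

# Number of fields: how many phone elements to put in the request.
phone_cases = boundaries(*PHONE_COUNT)

for value, ok in name_cases:
    print(f"lastName of length {len(value)}: {'accept' if ok else 'reject'}")
for count, ok in phone_cases:
    print(f"{count} phone element(s): {'accept' if ok else 'reject'}")
```

Each rejected case should produce a format error message and not a system error, which is the second half of the boundary check described above.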

Fundamental principles

It’s bad practice to divide the tests simply into positive and negative, since the messages explaining why a user can’t perform a particular action are as important as a successful import or export. Still, it’s good practice to divide the tests first into successful execution of the established scenario and only then into checks for particular error messages.

It’s also convenient to divide the tests by usage variant: first import, then export (as an admin, as an ordinary user).

When documenting the results of test design, we can use the following sequence of tests:

- Format control;

- Basic testing of a successful import;

- Multiple imports (an unlimited number of entities);

- Business errors and errors of the integration structure (the ones that can’t appear in the user interface, since the form has a fixed number of fields and values and an employee has access only to a particular group of operations);

- Authorization control checks;

- Testing a successful export;

- Multiple exports;

- Messages shown for export errors (for example, when there is no information or there are no access rights).

Export should always be tested after import, since this saves time on preparing the data needed to check the download of existing entities.
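The reason for this order can be shown with a small sketch. `FakeEmployeeService` is a hypothetical in-memory stand-in for the real SOAP service, and the employee records are invented; the point is only that the export checks reuse the data the import test has already created.

```python
class FakeEmployeeService:
    """In-memory stand-in for the real service (an assumption for this sketch)."""

    def __init__(self):
        self._employees = {}

    def import_employees(self, records):
        for record in records:
            self._employees[record["login"]] = dict(record)
        return len(records)

    def export_full(self):
        return [self._employees[login] for login in sorted(self._employees)]

    def export_short(self, login):
        if login not in self._employees:
            raise LookupError("No information about this employee")
        record = self._employees[login]
        return {"login": record["login"], "position": record["position"]}


def run_sequence(service, records):
    """Run import first, then the export checks against the imported data."""
    results = []
    results.append(("import", service.import_employees(records) == len(records)))

    # No separate data preparation: the export is compared with what was imported.
    expected = sorted(records, key=lambda r: r["login"])
    results.append(("full export", service.export_full() == expected))

    first = records[0]
    short = service.export_short(first["login"])
    results.append(("short export", short == {"login": first["login"],
                                              "position": first["position"]}))

    # Error case from the end of the sequence: there is nothing to export.
    try:
        service.export_short("unknown.login")
        error_raised = False
    except LookupError:
        error_raised = True
    results.append(("export error", error_raised))
    return results


EMPLOYEES = [  # hypothetical test data
    {"login": "j.smith", "position": "engineer", "phone": "555-0101"},
    {"login": "a.jones", "position": "analyst", "phone": "555-0102"},
]

results = run_sequence(FakeEmployeeService(), EMPLOYEES)
for name, passed in results:
    print(f"{name}: {'passed' if passed else 'FAILED'}")
```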

Test creation

Now let’s turn to writing the tests themselves. Follow these rules:

1. The text of the technical assignment should be constantly reworked while you create the tests.

- First, simply copying the technical assignment won’t give you a sufficient number of tests for your product;

- Second, there is a risk of missing something the developers introduced while implementing the logic of the functionality;

- Third, when you collect all tests in one table, use the names of specific tags and operations rather than general expressions from the technical assignment (for example, create an employee, fill in the name field, edit the phone number).

2. Write the expected result next to each test.

- Write briefly and clearly: a phrase like “executing script 1 from the basic algorithm” won’t be clear to a person who sees these tests for the first time;

- If the import is expected to succeed, say so. If you also need to check how the fields are filled in the UI or in the export, specify this too;

- If a particular message is expected, write its text rather than its ID or number, especially when you don’t know who will use this list for testing. Compare “message 060 appears” with “the message ‘You don’t have enough rights to do this operation’ appears”. This way, you check not just that the message appears but also that it is implemented correctly.

3. Consider who will use your document for testing, and when.

- Will it be newcomers who have only recently studied the basics of your product’s functionality? Then write the tests in more detail, since they may run into problems before they even start executing the first test;

- Will it be experienced employees who could write such material themselves? Then use only the terms that are familiar to them;

- If the tests are only for you, so that you don’t miss anything, think about when exactly this functionality will be implemented. Our brain is a cunning thing that never keeps unnecessary information. If you are not sure the tests will be run today or tomorrow, write everything in detail, so that a year or more from now you don’t have to study the technical assignment all over again and wonder what you meant.

4. Add a link to the technical assignment for this functionality to the tests, and regularly check whether it has changed.

5. If some time has passed since you finished writing the tests and part of the functionality has been implemented (this matters most when testing improvements to already developed functionality), spend some time rewriting the tests. Any documentation, even the most refined, tends to become outdated, and this is normal. But don’t edit it while running the tests: you can waste precious time finding out why the system doesn’t behave as was expected half a year ago, and you can also lose important tests and miss visible bugs.

6. Don’t spend much time linking tests into chains. Chains impede the work from the very beginning if you run into blocking defects;

7. If you need to check a particular value or a combination of parameters, don’t keep the standard checklist form; put the data in a table instead.

8. Split the text into tests so that each one takes up to 5 minutes, including preparation of all test components. Don’t write tests that cover more than one component. It’s better to have 10-20 tests that are completely ready and easy to run;

9. If you need to test multiple imports, think about how to make data preparation easier and mention this in the test documentation.
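Rules 2 and 7 combine naturally into a table of parameter combinations with the expected result written out in full. The sketch below assumes three operation names and two roles for the employee service; `call_operation` is a hypothetical stand-in for a real request to the API.

```python
DENIED = "You don't have enough rights to do this operation"
ADMIN_ONLY = {"import", "export_full"}  # assumed access rules for this sketch


def call_operation(role, operation):
    """Hypothetical stand-in for sending a real request to the API."""
    if operation in ADMIN_ONLY and role != "admin":
        return DENIED
    return "success"


# The table: role, operation, expected result (full message text, not an ID).
CASES = [
    ("admin", "import",       "success"),
    ("admin", "export_full",  "success"),
    ("admin", "export_short", "success"),
    ("user",  "import",       DENIED),
    ("user",  "export_full",  DENIED),
    ("user",  "export_short", "success"),
]

failures = []
for role, operation, expected in CASES:
    actual = call_operation(role, operation)
    if actual != expected:
        failures.append((role, operation, expected, actual))

print(f"{len(CASES) - len(failures)} of {len(CASES)} checks passed")
for role, operation, expected, actual in failures:
    print(f"{role}/{operation}: expected {expected!r}, got {actual!r}")
```

Each row is an independent check, so a blocking defect in one combination doesn’t stop the others, and adding a new combination means adding one line to the table.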

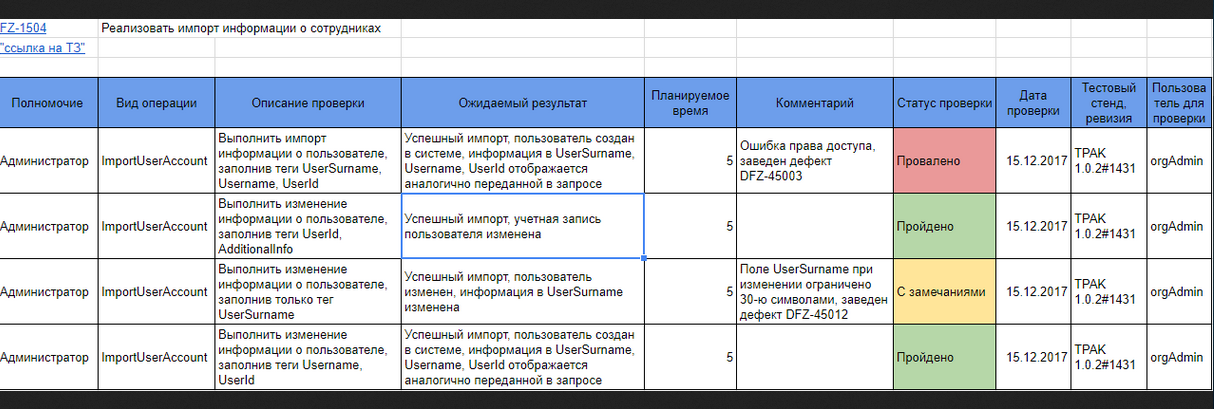

Common way of writing the results of API test design

The test design should contain the following fields:

- Function of a user;

- Verification;

- Expected result;

- Planned time interval;

- Status of a test;

- Date of a test;

- Revision, a test board;

- Client for testing.

In practice, it looks like this:

The List of Test Design Fields

Sometimes part of the tests has to be run repeatedly (because bugs were found with them, or because of related tests). In this case, it’s more efficient to update the date of the previous run than to add a new entry next to it.
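As a minimal sketch, the same list of fields can be kept as a structured record. The attribute names, the use of minutes for the planned time interval, and the sample values are assumptions made for illustration; a spreadsheet with the same columns works just as well.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DesignRecord:
    """One row of the test design; attribute names are illustrative."""
    user_function: str
    verification: str
    expected_result: str
    planned_minutes: int            # planned time interval, assumed to be in minutes
    status: str = "not run"
    run_date: Optional[date] = None
    revision: str = ""
    test_board: str = ""
    client: str = ""

    def record_run(self, status, run_date, revision):
        """Overwrite the previous run instead of adding a new row."""
        self.status = status
        self.run_date = run_date
        self.revision = revision


# Hypothetical sample row and run history.
records = [
    DesignRecord(
        user_function="admin",
        verification="Import one employee with all required fields",
        expected_result="Import succeeds; the employee appears in the full export",
        planned_minutes=5,
    )
]

records[0].record_run("failed", date(2024, 1, 10), "r101")
records[0].record_run("passed", date(2024, 1, 12), "r102")  # re-run after a fix

print(len(records), records[0].status, records[0].run_date, records[0].revision)
```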

Conclusion

API testing is constantly and inherently connected with checking the available documentation and designing test cases. This should not be neglected, since there is a big risk that the most important client scenarios won’t be fully tested.
